Hyperspectral camera reveals invisible details

The University of Washington and Microsoft Research are developing a hyperspectral camera that uses both visible and invisible near-infrared light to 'see' beneath surfaces and capture details invisible to the naked eye.

The team of computer scientists and electrical engineers has detailed a hardware solution that costs roughly $800, or potentially as little as $50 to add to a mobile phone camera. They also developed intelligent software that easily finds the 'hidden' differences between what the hyperspectral camera captures and what can be seen with the naked eye.

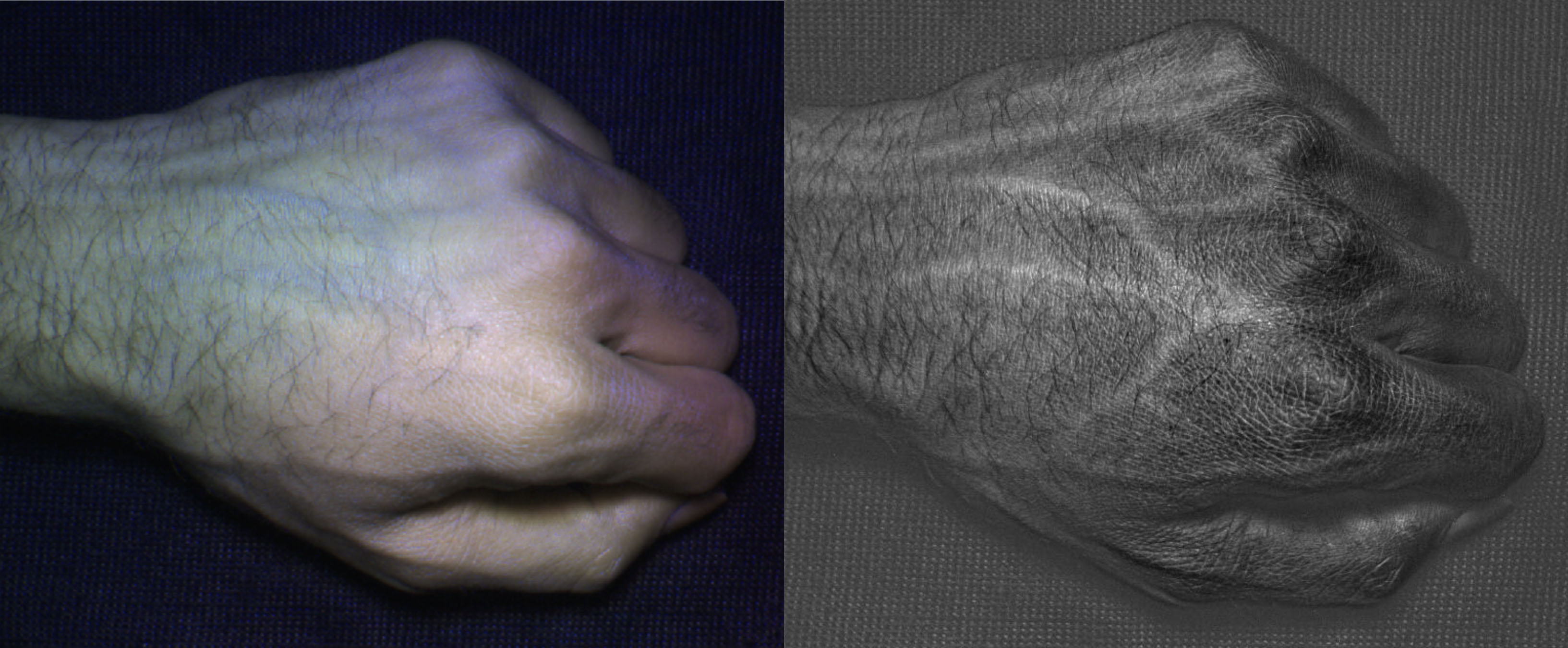

When HyperCam captured images of a person’s hand, for instance, they revealed detailed vein and skin texture patterns that are unique to that individual. That can aid in everything from gesture recognition to biometrics to distinguishing between two different people playing the same video game.

Compared to an image taken with a normal camera (left), HyperCam images (right) reveal detailed vein and skin texture patterns that are unique to each individual.

In a preliminary investigation of HyperCam's utility as a biometric tool, the system was able to differentiate between hand images of 25 different users with 99% accuracy.

In another test, the team also took hyperspectral images of 10 different fruits, from strawberries to mangoes to avocados, over the course of a week. The HyperCam images predicted the relative ripeness of the fruits with 94% accuracy, compared with only 62% for a typical camera.

“It’s not there yet, but the way this hardware was built you can probably imagine putting it in a mobile phone,” said Shwetak Patel, Washington Research Foundation Endowed Professor of Computer Science & Engineering and Electrical Engineering at the UW. “With this kind of camera, you could go to the grocery store and know what produce to pick by looking underneath the skin and seeing if there’s anything wrong inside. It’s like having a food safety app in your pocket.”

Hyperspectral imaging is used today in everything from satellite imaging and energy monitoring to infrastructure and food safety inspections, but the technology’s high cost has limited its use to industrial or commercial purposes. The UW and Microsoft Research team wanted to see if they could make a relatively simple and affordable hyperspectral camera for consumer uses.

HyperFrames taken with HyperCam predicted the relative ripeness of 10 different fruits with 94% accuracy, compared with only 62% for a typical (RGB) camera.

“Existing systems are costly and hard to use, so we decided to create an inexpensive hyperspectral camera and explore these uses ourselves,” said Neel Joshi, a Microsoft researcher who worked on the project. “After building the camera we just started pointing it at everyday objects - really anything we could find in our homes and offices - and we were amazed at all the hidden information it revealed.”

A typical camera divides visible light into three bands (red, green and blue) and generates images using different combinations of those colours. But cameras that utilise other wavelengths in the electromagnetic (EM) spectrum can reveal differences that are otherwise invisible.

Near-infrared cameras, for instance, can reveal whether crops are healthy or a work of art is genuine. Thermal infrared cameras can visualise where heat is escaping from leaky windows or an overloaded electrical circuit.

“When you look at a scene with a naked eye or a normal camera, you’re mostly seeing colours. You can say, ‘Oh, that’s a pair of blue pants,’” said lead author Mayank Goel, a UW computer science and engineering doctoral student and Microsoft Research graduate fellow. “With a hyperspectral camera, you’re looking at the actual material that something is made of. You can see the difference between blue denim and blue cotton.”

HyperCam, which uses the visible and near-infrared parts of the EM spectrum, illuminates a scene with 17 different wavelengths and generates an image for each.

One challenge in hyperspectral imaging is sorting through the sheer volume of frames produced. The UW software analyses the images and finds ones that are most different from what the naked eye sees, essentially zeroing in on ones that the user is likely to find most revealing.
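The idea of ranking frames by how much they differ from an ordinary view can be illustrated with a small sketch. This is not the team's actual software, whose method is not described here. It assumes a simple stand-in measure (mean absolute pixel difference after rescaling each image to the range 0 to 1), and the function names, wavelength labels and toy pixel values are all hypothetical.

```python
from statistics import mean


def normalize(image):
    """Rescale a 2D image (a list of rows) to the range [0, 1]."""
    flat = [p for row in image for p in row]
    lo, hi = min(flat), max(flat)
    span = hi - lo
    if span == 0:
        # A flat image carries no contrast; map every pixel to 0.
        return [[0.0 for _ in row] for row in image]
    return [[(p - lo) / span for p in row] for row in image]


def difference_score(band, reference):
    """Mean absolute per-pixel difference between two normalized images.

    Assumed metric for illustration only, not the published one.
    """
    nb, nr = normalize(band), normalize(reference)
    return mean(abs(b - r) for rb, rr in zip(nb, nr) for b, r in zip(rb, rr))


def rank_bands(bands, reference):
    """Return band labels sorted from most to least different.

    bands: dict mapping a wavelength label (hypothetical, in nm) to a 2D image.
    reference: 2D grayscale image standing in for what a normal camera sees.
    """
    scores = {wl: difference_score(img, reference) for wl, img in bands.items()}
    return sorted(scores, key=scores.get, reverse=True)


# Toy 2x2 data: made-up pixel values and made-up wavelength labels.
reference = [[0, 10], [10, 0]]
bands = {
    450: [[0, 10], [10, 0]],   # identical to the reference
    850: [[10, 0], [0, 10]],   # every pixel inverted
    950: [[0, 10], [10, 10]],  # one pixel differs
}

print(rank_bands(bands, reference))  # [850, 950, 450]
```

In this toy case the inverted band ranks first and the band identical to the reference ranks last, which mirrors the goal of surfacing the frames a user is likely to find most revealing. A real system would work on full-resolution frames and would likely need a more robust comparison than a per-pixel difference.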

“It mines all the different possible images and compares it to what a normal camera or the human eye will see and tries to figure out what scenes look most different,” Goel said. One remaining challenge is that the technology doesn’t work particularly well in bright light, he added. Next research steps will include addressing that problem and making the camera small enough to be incorporated into mobile phones and other devices.

Co-authors include Eric Whitmire, Alex Mariakakis and the late Gaetano Borriello of the UW’s Department of Computer Science & Engineering and T. Scott Saponas, Neel Joshi, Dan Morris, Brian Guenter and Marcel Gavriliu at Microsoft Research.

The project was funded by Microsoft Research.