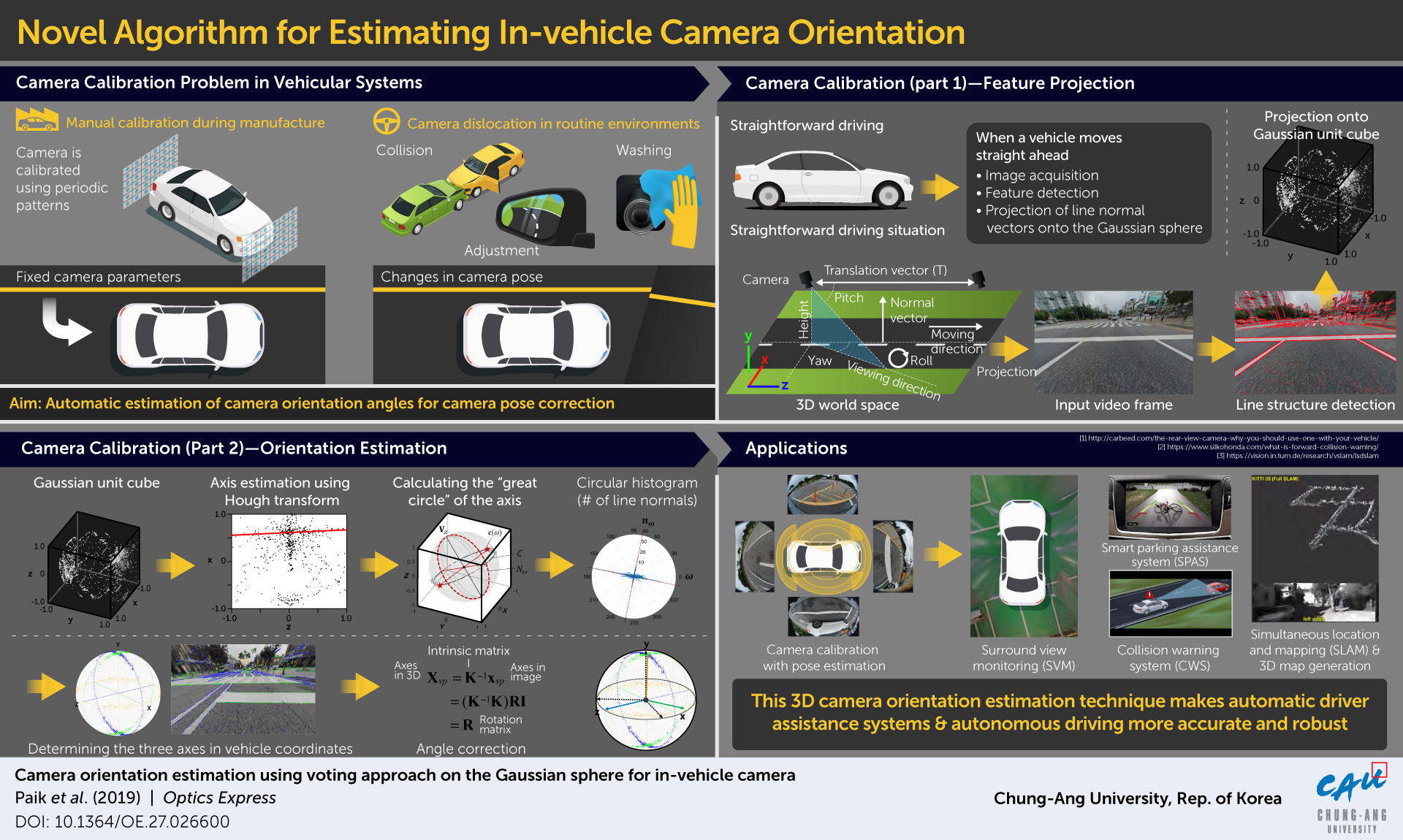

Camera calibration method for safer autonomous vehicles

Autonomous vehicles watch the roads before them using inbuilt cameras. Ensuring that accurate camera orientation is maintained during driving is, therefore, key to letting these vehicles out on roads. Taking us one step closer to realising autonomous driving systems, scientists from Korea have developed a highly accurate and efficient camera orientation estimation method that will enable such vehicles to navigate safely across distances.

Since their invention, vehicles have constantly advanced. As vehicular technology progresses, it seems that the roads of the near future will be occupied by autonomous driving systems. To move forward on the path to this future, scientists have developed camera and image sensing technologies that will allow these vehicles to reliably sense and visualise the surrounding environment.

While developing this technology, scientists have faced various challenges. One of the most important has been keeping the orientation of inbuilt cameras accurate during straightforward driving. Autonomous vehicles navigate and gauge distances using inbuilt cameras that image the world through which they are moving, but these cameras often get displaced during dynamic driving.

Prof. Joonki Paik from Chung-Ang University explained: “Camera calibration is of utmost importance for future vehicular systems, especially autonomous driving, because camera parameters, such as focal length, rotation angles, and translation vectors, are essential for analysing the 3D information in the real world.”
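To make the role of these parameters concrete, here is a minimal sketch of the standard pinhole camera model, which is an assumption of this illustration and is not taken from the team's paper. The function names `project_point` and `yaw_rotation`, the 1000-pixel focal length and the 1280 x 720 image centre are all hypothetical values chosen for the example.

```python
import math

IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]


def yaw_rotation(angle_rad):
    """Rotation about the camera's vertical (y) axis; an illustrative convention."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]


def project_point(point_world, focal_px, principal_point, R, t):
    """Project one 3D world point to pixel coordinates with a pinhole model."""
    # World frame -> camera frame: X_cam = R * X_world + t
    cam = [sum(R[i][j] * point_world[j] for j in range(3)) + t[i] for i in range(3)]
    x, y, z = cam
    if z <= 0:
        raise ValueError("point is behind the camera")
    cx, cy = principal_point
    # Perspective division, then scaling by the focal length (in pixels)
    return (focal_px * x / z + cx, focal_px * y / z + cy)


# A point 10 m straight ahead, seen by a perfectly aligned camera
aligned = project_point((0.0, 0.0, 10.0), 1000.0, (640.0, 360.0),
                        IDENTITY, (0.0, 0.0, 0.0))

# The same point after the camera has been knocked 2 degrees off in yaw
knocked = project_point((0.0, 0.0, 10.0), 1000.0, (640.0, 360.0),
                        yaw_rotation(math.radians(2.0)), (0.0, 0.0, 0.0))

print(aligned, knocked)
```

Under these assumed values, a 2-degree error in yaw moves the projected point by roughly 35 pixels, which is why a small, undetected change in orientation can distort any distance estimate derived from the image.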

Several research groups have developed and refined methods of estimating the orientation of vehicle-mounted cameras over the years. These methods have included computational approaches such as the voting algorithm, use of the Gaussian sphere, and application of deep learning and machine learning, among other techniques. However, none of these methods is fast enough to perform this estimation accurately during real-time driving in real-world conditions.

To remedy the problem of estimation speed, a team of scientists from Chung-Ang University, led by Prof. Paik, combined some of these previously developed approaches and proposed a novel, more accurate and efficient algorithm. Their method, published in Optics Express, is designed for fixed-focus cameras placed at the front of the vehicle and for straightforward driving. It involves three steps.

First, the camera captures an image of the environment in front of the vehicle, and parallel lines on the objects in the image are mapped along the three Cartesian axes. These lines are then projected onto what is called the Gaussian sphere, and the normals of the planes defined by these lines are extracted. Second, a feature extraction technique called the Hough transform is applied to pinpoint “vanishing points” along the direction of driving (vanishing points are points at which parallel lines appear to intersect in an image taken from a certain perspective, such as the sides of a railway track converging in the distance). Third, using a type of graph called the circular histogram, the vanishing points along the two remaining perpendicular Cartesian axes are also identified.
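The geometry behind the first two steps can be illustrated with the railway-track example. The sketch below is not the team's algorithm: it replaces Hough voting over many lines with a single cross product over two lines, and every name in it (`plane_normal`, `vanishing_direction`, `to_pixel`), along with the assumed focal length and image centre, is hypothetical. Each image line, together with the camera centre, defines a plane whose unit normal is a point on the Gaussian sphere; the direction shared by two parallel lines is perpendicular to both normals.

```python
import math

FOCAL_PX = 1000.0          # assumed focal length in pixels
CENTRE = (640.0, 360.0)    # assumed principal point of a 1280 x 720 image


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def pixel_to_ray(pixel):
    """Back-project a pixel to a viewing ray in the camera frame."""
    return ((pixel[0] - CENTRE[0]) / FOCAL_PX, (pixel[1] - CENTRE[1]) / FOCAL_PX, 1.0)


def plane_normal(p1, p2):
    """Unit normal of the plane through the camera centre and an image line."""
    return normalize(cross(pixel_to_ray(p1), pixel_to_ray(p2)))


def vanishing_direction(n1, n2):
    """3D direction shared by two parallel lines, from their plane normals."""
    d = normalize(cross(n1, n2))
    return d if d[2] >= 0 else tuple(-c for c in d)  # point away from the camera


def to_pixel(direction):
    """Image location of the vanishing point (direction must not be parallel to the image plane)."""
    return (FOCAL_PX * direction[0] / direction[2] + CENTRE[0],
            FOCAL_PX * direction[1] / direction[2] + CENTRE[1])


# Two rails of a straight track, each given by two pixels on the rail
right_rail = plane_normal((790.0, 660.0), (655.0, 390.0))
left_rail = plane_normal((490.0, 660.0), (625.0, 390.0))

forward = vanishing_direction(right_rail, left_rail)
print(forward, to_pixel(forward))
```

In this synthetic scene the two rails converge at the image centre, so the recovered direction is the camera's own optical axis. If the camera were tilted or turned, the vanishing point would move away from the centre, and that offset is what an orientation estimate is read from. A practical system has to cope with hundreds of noisy line segments, which is where a voting scheme such as the Hough transform comes in.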

Prof. Paik’s team tested this method in an on-road experiment under real driving conditions in a Manhattan world, that is, an environment whose dominant structures align with three mutually perpendicular directions. They captured three driving environments in three videos and noted the accuracy and efficiency of the method for each. They found accurate and stable estimates in two cases. In the environment captured in the remaining video, the method performed poorly because there were many trees and bushes within the camera’s field of view.

Overall, however, the method performed well under realistic driving conditions. Prof. Paik and his team credit the method’s high-speed estimation to the fact that the 3D voting space is converted to a 2D plane at each step of the process.
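One way to see why shrinking the search space saves time is the following sketch of a circular histogram. It is an illustration under stated assumptions and not the published implementation: it assumes a Manhattan world, takes the forward direction as already known, and uses the hypothetical names `remaining_axes`, `bins` and `min_offaxis`. Once the forward direction is fixed, the other two directions must lie in the plane perpendicular to it and sit 90 degrees apart, so each line casts a vote for a single angle rather than for a point on the whole sphere.

```python
import math


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def normalize(v):
    length = math.sqrt(dot(v, v))
    return tuple(c / length for c in v)


def remaining_axes(forward, normals, bins=90, min_offaxis=0.1):
    """Vote for the two axes perpendicular to `forward` in a folded angle histogram."""
    d = normalize(forward)
    # Build an orthonormal basis (e1, e2) of the plane perpendicular to d
    helper = min(((1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)),
                 key=lambda axis: abs(dot(axis, d)))
    e1 = normalize(cross(d, helper))
    e2 = cross(d, e1)
    quarter = math.pi / 2
    counts = [0] * bins
    for n in normals:
        if abs(dot(n, d)) < min_offaxis:
            continue  # this line already agrees with the forward direction
        candidate = cross(d, n)  # perpendicular to both d and the plane normal
        if math.sqrt(dot(candidate, candidate)) < 1e-9:
            continue  # degenerate case: no usable vote
        # Fold the angle into [0, 90 degrees) so both unknown axes share one peak
        theta = math.atan2(dot(candidate, e2), dot(candidate, e1)) % quarter
        counts[min(int(theta / quarter * bins), bins - 1)] += 1
    peak = max(range(bins), key=counts.__getitem__)
    theta = (peak + 0.5) * quarter / bins  # centre of the winning bin
    v1 = tuple(math.cos(theta) * e1[i] + math.sin(theta) * e2[i] for i in range(3))
    v2 = cross(d, v1)
    return v1, v2
```

With 90 bins the answer is quantised to one degree, which is a deliberate trade of resolution for speed in this sketch; a finer histogram or a refinement step around the peak would tighten the estimate.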

What’s more, Prof. Paik says that their method “can be immediately incorporated into automatic driver assistance systems (ADASs).” It could also be useful for a variety of other applications, such as collision avoidance, parking assistance, and 3D map generation of the surrounding environment, helping to prevent accidents and promote safer driving environments.

As for future research in the field, Prof. Paik is hopeful about the potential of this method: “We are planning to extend this to smartphone applications like augmented reality and 3D reconstruction.”